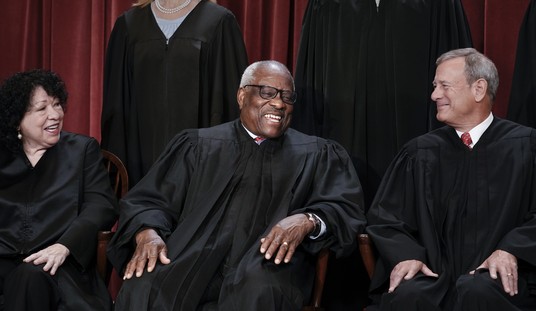

Facebook CEO Mark Zuckerberg arrives before a joint hearing of the Commerce and Judiciary Committees on Capitol Hill in Washington, Tuesday, April 10, 2018, about the use of Facebook data to target American voters in the 2016 election. (AP Photo/Andrew Harnik)

Facebook has been frantically trying to keep its head above the hot water it has found itself in since the 2016 elections.

In a rare move, the social media giant revealed a 25-page “rule book” on how it chooses which posts to censor and how it plans to limit illegal or bullying activity on its site. This is the first time Facebook has allowed its users to see the rules and guidelines used by content monitors.

One of the interesting things brought up at the pre-release press conference last week was the inordinate amount of strain content monitors often work under. American consumers complain about their “offensive” comments being moderated, but there is much more to the job than that.

Many monitors must sift through extremely vile content on a regular basis, including beheading videos and child porn. Facebook revealed that they do have counselors available on-site for their staff, but they haven’t said whether or not they’ve restricted the amount of viewing hours per monitor. Interestingly, YouTube recently instituted a 4-hour viewing limit for their monitors, but it was not clear if Facebook would follow suit.

The most notable change will come in what the company deems a “clarification” of previous hate speech rules that caused some controversy when leaked to the media last year. The training documents tried to outline the difference between what could be considered a “protected group” and what would not be considered as such, but the language was bumbling and caused some outrage among those who viewed it as racist. ProPublica did its best to explain the issue at the time:

One document trains content reviewers on how to apply the company’s global hate speech algorithm. The slide identifies three groups: female drivers, black children and white men. It asks: Which group is protected from hate speech? The correct answer: white men.

The reason is that Facebook deletes curses, slurs, calls for violence and several other types of attacks only when they are directed at “protected categories”—based on race, sex, gender identity, religious affiliation, national origin, ethnicity, sexual orientation and serious disability/disease. It gives users broader latitude when they write about “subsets” of protected categories. White men are considered a group because both traits are protected, while female drivers and black children, like radicalized Muslims, are subsets, because one of their characteristics is not protected.

The new guidelines try to clarify the issue, although it seems they’ve just widened the concept of “protected groups,” veering slightly from the legal, constitutional definition. From TechCrunch:

Now [VP of Global Product Management Monika Bickert ] says “Black children — that would be protected. White men — that would also be protected. We consider it an attack if it’s against a person, but you can criticize an organization, a religion . . . If someone says ‘this country is evil’, that’s something that we allow. Saying ‘members of this religion are evil’ is not.” She explains that Facebook is becoming more aware of the context around who is being victimized. However, Bickert notes that if someone says “‘I’m going to kill you if you don’t come to my party’, if it’s not a credible threat we don’t want to be removing it.”

Most of the guidelines remain unchanged. The unusual release seems to be part of an effort to quell growing criticism of Facebook’s “hidden agenda” and secrecy. It may also be an effort to shield the platform from any legal prosecution in cases of violence or hate crimes that have been connected to Facebook posts.

Revealing the guidelines could at least cut down on confusion about whether hateful content is allowed on Facebook. It isn’t. Though the guidelines also raise the question of whether the Facebook value system it codifies means the social network has an editorial voice that would define it as a media company. That could mean the loss of legal immunity for what its users post. Bickert stuck to a rehearsed line that “We are not creating content and we’re not curating content”. Still, some could certainly say all of Facebook’s content filters amount to a curatorial layer.

Is Facebook making an honest attempt to be more transparent about its policies, or is this just more smoke and mirrors?
